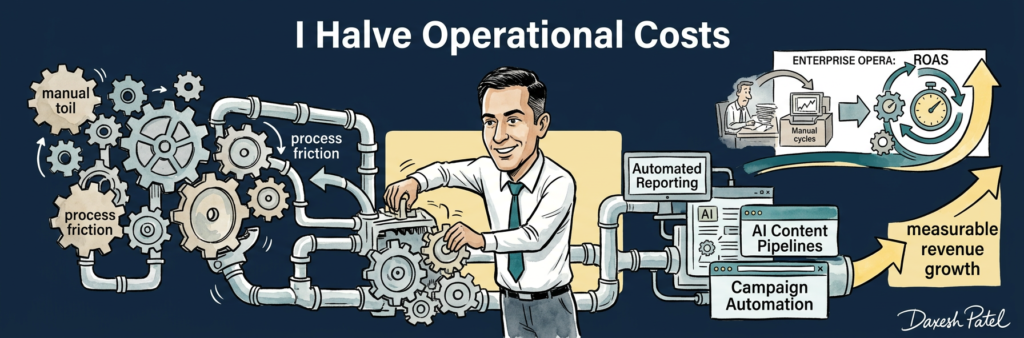

I believe most firms are overspending on AI because they are buying software before they have diagnosed operational friction. In 2026, that mistake is getting more expensive, not less, because vendors are selling breadth while commercial performance still depends on fixing a few bottlenecks: reporting lag, content throughput, campaign execution speed, and decision latency.

The prevailing consensus says leaders should start with a broad AI platform and “upskill the team” around it. I think that framing produces weak outcomes because it treats AI as a category purchase rather than an operating decision. I have seen teams spend six figures on tooling while weekly reporting still takes days, paid media changes still queue behind manual checks, and content still stalls in review loops. The mechanism is simple: automation value compounds only when it is attached to revenue-critical workflows.

Why the Consensus Is Incomplete

Most leaders are making the wrong decision because market incentives push them there. Vendors sell horizontal capability; operators need vertical commercial impact. Boards ask whether the business has an AI plan; they ask far less often which manual process is suppressing margin this quarter. That gap matters. When reporting, content production, and campaign execution stay manual, teams carry hidden cost in salary time, slower optimisation, and missed demand capture. I have managed more than £30M in multi-market budgets, and the same pattern appears at every scale: the cost is not just inefficiency; it is slower revenue.

Evidence the Market Is Missing

More than 123% ROAS uplift: When I paired automation with strict commercial guardrails in paid media, return on ad spend (ROAS) improved materially. Implication: the gain came from faster, more consistent execution, not from buying more tools. Decision change: fund workflow automation inside channel operations before funding another platform layer.
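For readers less familiar with the metric, here is a minimal sketch of how a ROAS uplift figure is calculated. ROAS is revenue divided by ad spend, and uplift is the percentage change against a baseline. The function names and the revenue and spend figures are hypothetical, chosen only so the arithmetic lands near 123%; they are not data from the campaigns described above.

```python
def roas(revenue: float, ad_spend: float) -> float:
    """Return on ad spend: revenue earned per unit of media spend."""
    if ad_spend <= 0:
        raise ValueError("ad_spend must be positive")
    return revenue / ad_spend


def uplift_pct(baseline: float, current: float) -> float:
    """Percentage change of current relative to baseline."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return (current - baseline) / baseline * 100


# Hypothetical figures, used only to make the arithmetic visible.
before = roas(revenue=20_000, ad_spend=10_000)  # 2.0
after = roas(revenue=44_600, ad_spend=10_000)   # 4.46
print(f"ROAS uplift: {uplift_pct(before, after):.0f}%")
```

An uplift above 100% means the same spend is returning more than double the baseline revenue, which is why execution speed and consistency matter more than tool count.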

More than £30M in budget complexity: Large budget management taught me that scale does not create different bottlenecks, only more expensive ones. Implication: SMBs should not assume they need enterprise-grade sprawl to get value. Decision change: start with one high-friction process tied to revenue and prove speed, accuracy, and control there.

Manual workload reductions across reporting, content generation, and campaign execution: I have repeatedly used automation to remove repetitive labour across those three areas. Implication: the real payback starts where human effort is frequent, rules-based, and time-sensitive. Decision change: prioritise recurring workflows over one-off AI experiments.
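One way to make "frequent, rules-based, and time-sensitive" concrete is a simple scoring pass over candidate workflows. The sketch below is an illustration, not a validated model: the `Workflow` fields, the 0 to 1 ratings, the cap of 20 runs per month, and the 0.25 penalty for work not tied to revenue are all assumptions you would replace with your own judgment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workflow:
    name: str
    runs_per_month: int    # how often people repeat the task by hand
    rules_based: float     # 0..1, how much of the task follows fixed rules
    time_sensitive: float  # 0..1, how costly a delay is
    revenue_linked: bool   # does the task sit on a revenue-critical path?


def priority_score(w: Workflow) -> float:
    """Multiply the three traits so a workflow must have all of them to rank."""
    frequency = min(w.runs_per_month / 20, 1.0)  # assumed cap: roughly daily
    score = frequency * w.rules_based * w.time_sensitive
    # Assumed penalty for work with no direct revenue link.
    return score if w.revenue_linked else score * 0.25


# Hypothetical candidates and ratings.
candidates = [
    Workflow("weekly reporting", 4, 0.9, 0.8, True),
    Workflow("paid media bid changes", 20, 0.8, 0.9, True),
    Workflow("one-off AI brainstorm", 1, 0.2, 0.1, False),
]

ranked = sorted(candidates, key=priority_score, reverse=True)
for w in ranked:
    print(f"{w.name}: {priority_score(w):.3f}")
```

The multiplication is deliberate: a task that is frequent but not rules-based, or rules-based but rare, scores low, which pushes one-off experiments to the bottom of the list.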

Multi-market execution: The more regions, channels, and stakeholders involved, the more damaging process inconsistency becomes. Implication: governance is not a compliance add-on; it is what makes automation commercially safe. Decision change: define approval logic, audit trails, and data ownership before scaling usage.
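To show what approval logic and an audit trail can look like in practice, here is a minimal sketch for automated bid changes. Everything in it is hypothetical: the 15% threshold, the `AuditLog` class, and the `propose_bid_change` function are illustrative names, not part of any ad platform's API.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

MAX_AUTO_CHANGE_PCT = 15.0  # assumed guardrail; set per market and channel


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, actor: str, action: str, detail: dict) -> None:
        """Append a timestamped entry; entries are added, never edited."""
        self.entries.append({
            "at": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "detail": detail,
        })


def propose_bid_change(current_bid: float, new_bid: float,
                       actor: str, log: AuditLog) -> str:
    """Auto-apply small changes; route larger ones to a human approver."""
    if current_bid <= 0:
        raise ValueError("current_bid must be positive")
    change_pct = abs(new_bid - current_bid) / current_bid * 100
    decision = ("auto_applied" if change_pct <= MAX_AUTO_CHANGE_PCT
                else "needs_approval")
    # Every proposal is logged, whether or not it was applied.
    log.record(actor, decision, {"from": current_bid, "to": new_bid,
                                 "change_pct": round(change_pct, 2)})
    return decision


log = AuditLog()
small = propose_bid_change(1.00, 1.10, "bid-automation", log)
large = propose_bid_change(1.00, 1.50, "bid-automation", log)
print(small, large, len(log.entries))
```

The point of the design is that the guardrail and the log exist before the automation scales: routine changes move without waiting, and anything unusual leaves a record and lands with an accountable person.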

Strategic implication table

| Conventional Leader Response | Research Finding | Strategic Risk of Inaction | Better Approach |
|---|---|---|---|
| Buy a broad AI suite first | ROAS improved when automation sat inside execution workflows | Tool cost without operating leverage | Automate one revenue bottleneck first |
| Treat reporting as admin | Reporting automation cuts manual drag | Slower decisions and weaker optimisation | Build automated reporting as operating infrastructure |
| Pilot content AI in isolation | Content pipelines work when linked to approvals and demand capture | More output, little commercial gain | Connect content automation to SEO, CRO, and media |
| Add governance later | Multi-market scale amplifies inconsistency | Security, compliance, and brand risk | Set audit trails, permissions, and data rules upfront |

Decision framework

Automated reporting, bid-change workflows

Integrated campaign automation with CRM and approvals

Standalone prompt tools, internal assistants

Suite-wide rollouts before process redesign

What leading teams understand earlier

I see the best teams make four decisions earlier than everyone else. First, they treat reporting latency as a growth issue; that creates an advantage because faster visibility shortens the optimisation cycle. Second, they standardise data inputs before they automate; that prevents bad outputs at scale. Third, they put one accountable owner over marketing automation, content operations, and channel execution; that removes hand-off delays. Fourth, they define governance early; that lets them move faster later because approvals, permissions, and auditability are already built in.

Five leadership recommendations

Fund workflow redesign before software expansion. Most leaders delay because software feels tangible. Waiting another quarter preserves friction and compounds labour cost. Early proof is reduced turnaround time on recurring tasks.

Start with reporting. Leaders delay because it looks non-strategic. Waiting means every channel decision is made slower than it should be. Early proof is fewer manual interventions and faster weekly reviews.

Automate content only when distribution is connected. Delay happens when teams separate production from demand. Waiting creates more assets without more revenue. Early proof is faster publishing tied to search, paid, or CRM performance.

Build governance before scale. Leaders delay because it feels procedural. Waiting raises vendor, privacy, and brand risk. Early proof is clear ownership, approval logs, and restricted data access.

Assign one operator, not a committee. Delay happens because AI gets parked in cross-functional forums. Waiting kills speed. Early proof is a live pilot moving from test to routine use inside one quarter.

The central argument is straightforward: AI should be funded as an operating lever, not as a technology badge. The firms getting real value in 2026 are not the ones with the biggest stack; they are the ones removing the most friction from revenue-producing work.

I know why this is hard. Budget lines, internal politics, security reviews, and vendor pressure all push leaders towards broad purchases and vague plans. But the commercial answer is still the same: diagnose the bottleneck, automate the workflow, measure the margin effect, then scale.

If your AI plan does not change cycle time, it is not a growth plan.

FAQ

- How should an SMB decide between a custom AI workflow and an off-the-shelf suite?

  I start with the workflow, not the product. If the bottleneck is specific, repeated, and tied to revenue, I back a narrower build or integration before I buy broad suite capability.

- What is the best first AI automation use case in marketing?

  Automated reporting is usually first because it improves decision speed across every channel. It is easier to govern, easier to measure, and it exposes the next bottleneck quickly.

- Does AI content automation actually drive revenue?

  Only when it is connected to distribution and conversion. More content alone is not a commercial result; faster production linked to SEO, paid media, and CRO is.

- Are enterprise AI platforms overkill for smaller firms?

  Often, yes. I have seen smaller firms buy enterprise breadth before they have the process discipline to use it well, which creates cost without leverage.

- What governance matters most for AI automation in a smaller business?

  Data ownership, permissions, approval logs, and vendor risk checks matter first. If those are weak, scale creates exposure faster than value.

- Is the common advice to “train the team first” wrong?

  On its own, yes. Training without a defined workflow simply teaches people to experiment; it does not create operational gain.